Auto-Encoder

AutoEncoder, AE

The origin of the autoencoder is unclear (see Autoencoder - Deep Learning).

Usage:

- Dimensionality reduction: Reducing the Dimensionality of Data with Neural Networks (Science 2006)

- Information retrieval via semantic hashing: produce a low-dimensional binary code for each entry and store it in a hash table. Entries with the same binary code can be returned directly, and similar (or slightly less similar) entries can be searched efficiently by flipping a few bits of the code

- Denoising and inpainting tasks
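The semantic hashing idea in the list above can be sketched in a few lines. This is only a minimal illustration, assuming the binary codes have already been produced (for example by thresholding an encoder's output); the names `build_table`, `lookup`, and the `doc_*` entries are hypothetical and not from any library.

```python
from collections import defaultdict
from itertools import combinations


def build_table(codes):
    """Map each binary code (tuple of 0/1) to the ids of the entries sharing it."""
    table = defaultdict(list)
    for entry_id, code in codes.items():
        table[tuple(code)].append(entry_id)
    return table


def lookup(table, query, max_flips=1):
    """Return ids whose code differs from `query` in at most `max_flips` bits."""
    query = tuple(query)
    results = []
    for r in range(max_flips + 1):
        # Probe every code reachable by flipping exactly r bits of the query.
        for positions in combinations(range(len(query)), r):
            probe = list(query)
            for p in positions:
                probe[p] ^= 1
            results.extend(table.get(tuple(probe), []))
    return results


# Hypothetical 4-bit codes standing in for thresholded encoder outputs.
codes = {
    "doc_a": (1, 0, 1, 1),
    "doc_b": (1, 0, 1, 1),
    "doc_c": (1, 0, 0, 1),
    "doc_d": (0, 1, 0, 0),
}
table = build_table(codes)
exact = lookup(table, (1, 0, 1, 1), max_flips=0)  # ['doc_a', 'doc_b']
near = lookup(table, (1, 0, 1, 1), max_flips=1)   # ['doc_a', 'doc_b', 'doc_c']
```

Exact matches cost a single hash lookup. Widening the search to "less similar" entries only requires probing the neighbouring codes, which stays cheap as long as the code is short and few bits are flipped.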

VAE (ICLR 2014)

Auto-Encoding Variational Bayes

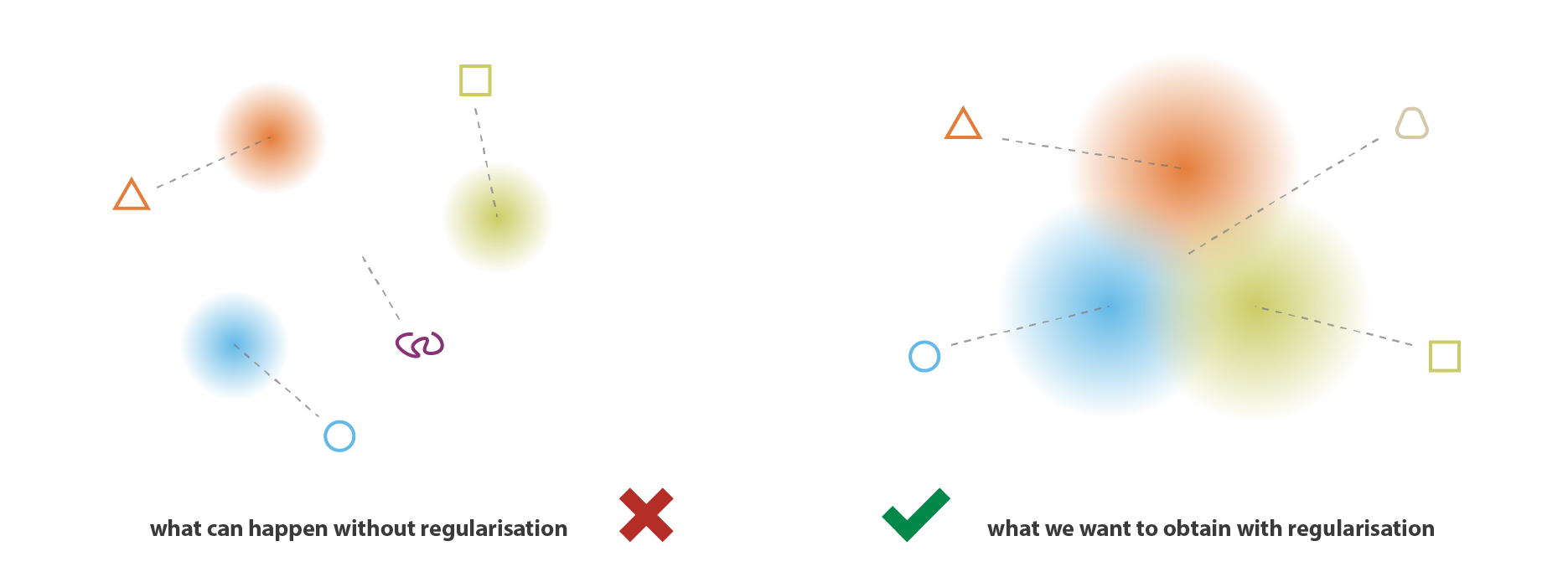

- Adds a KL divergence loss term

- Encodes a mean vector and a standard deviation vector, then combines them into a sampled latent vector; adding noise that follows a standard normal distribution to the latent space regularizes the model and helps it generalize
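The sampling step and the divergence term can be sketched as follows. This is a plain-Python illustration, not the paper's implementation: it assumes a diagonal Gaussian encoder and a standard normal prior, and the helper names `sample_latent` and `kl_to_standard_normal` as well as the example vectors are made up for the sketch.

```python
import math
import random


def sample_latent(mean, std, rng):
    """Reparameterized sample: z = mean + std * eps, with eps ~ N(0, 1) per dimension."""
    return [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mean, std)]


def kl_to_standard_normal(mean, std):
    """Closed-form KL(N(mean, diag(std^2)) || N(0, I)), summed over dimensions."""
    return sum(0.5 * (m * m + s * s - 1.0) - math.log(s) for m, s in zip(mean, std))


# Hypothetical encoder outputs for a single input.
mean = [0.5, -1.0]
std = [1.0, 0.5]

rng = random.Random(0)             # seeded only to make the sketch repeatable
z = sample_latent(mean, std, rng)  # latent vector that would be passed to the decoder
kl = kl_to_standard_normal(mean, std)
kl_zero = kl_to_standard_normal([0.0, 0.0], [1.0, 1.0])  # encoder equals the prior
```

Because the noise enters only through `eps`, the sample is a deterministic function of `mean` and `std`, which is what lets gradients reach the encoder in a real framework. The KL term is zero exactly when the encoder outputs the prior itself, so it pulls the latent codes toward a standard normal.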

\[\log p(x) \geq \log p(x) - D_{KL}\big(q(z) \,\|\, p(z \mid x)\big) = \mathbb{E}_{z \sim q}\left[\log p(x, z)\right] + H(q)\]
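The bound can be checked numerically on a small discrete example. The sketch below uses a made-up joint distribution over three latent values for one fixed observation x; the numbers and the helper names `elbo` and `kl` are assumptions chosen only for illustration.

```python
import math

# Hypothetical joint p(x, z) for one fixed observation x and z in {0, 1, 2}.
p_joint = [0.10, 0.25, 0.05]
p_x = sum(p_joint)                      # marginal p(x)
posterior = [p / p_x for p in p_joint]  # true posterior p(z | x)


def elbo(q):
    """Right-hand side of the bound: E_{z~q}[log p(x, z)] + H(q)."""
    expected_log_joint = sum(qi * math.log(pi) for qi, pi in zip(q, p_joint) if qi > 0)
    entropy = -sum(qi * math.log(qi) for qi in q if qi > 0)
    return expected_log_joint + entropy


def kl(q, p):
    """D_KL(q || p) for discrete distributions given as lists."""
    return sum(qi * math.log(qi / pi) for qi, pi in zip(q, p) if qi > 0)


q = [0.5, 0.3, 0.2]                     # an arbitrary approximate posterior
lhs = math.log(p_x) - kl(q, posterior)  # log p(x) - D_KL(q(z) || p(z | x))
rhs = elbo(q)                           # E_{z~q}[log p(x, z)] + H(q)
gap = math.log(p_x) - rhs               # equals the KL term, so it is never negative
```

Here `lhs` and `rhs` agree up to floating-point error, and `gap` vanishes only when `q` equals the true posterior, which is the sense in which the bound is tight only for a perfect q.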

Result:

- Improves the latent space, which can then be manipulated

- Improves generalization ability

from Understanding Variational Autoencoders (VAEs) - Towards Data Science


Disadvantages compared with GAN:

- Blurry output

- Not asymptotically consistent unless q is perfect

AVB (2017)

Adversarial Variational Bayes: Unifying Variational Autoencoders and Generative Adversarial Networks

VAE-GAN (ICML 2016)

Autoencoding Beyond Pixels Using a Learned Similarity Metric

ALAE

Adversarial Latent Autoencoders (CVPR 2020)

PyTorch

StyleGAN + latent space reconstruction via VAE (is the concept a bit like UNIT in a single domain, with the loss computed in latent space?)